The night before any interview, sparks of doubt flit through my mind: Did I schedule it for tomorrow, or for the next day by accident? Did I mix up a.m. with p.m.? And did I get the location right?

Thankfully, Google’s artificial intelligence has my back. I unlock my phone with a fingerprint scan, glance briefly at the email app, then tap through to my calendar, which has automatically added the meeting based on the emails I’d sent to set it up.

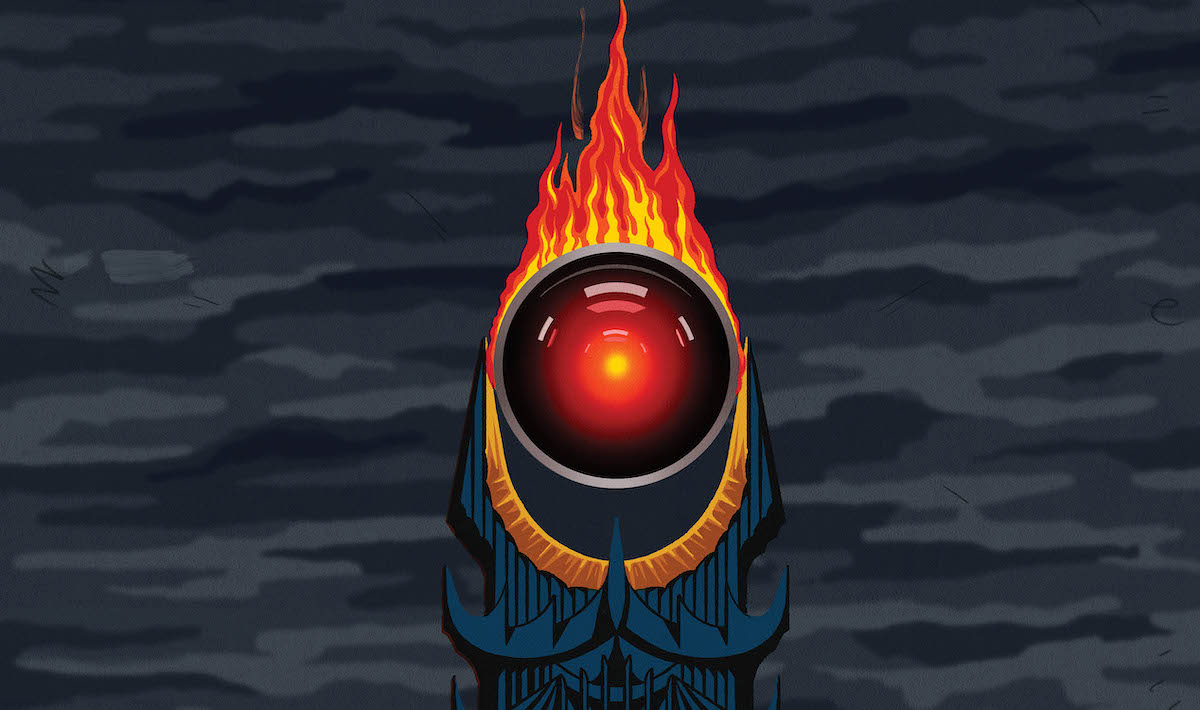

Yes, I have put my full trust in our high-tech AI overlords. While I can’t hire an assistant to schedule my meetings or prepare for my interviews, AI has kept me on track: filling my calendar, guiding me through traffic and recommending useful tools and online content. Most importantly, it has taken over tasks I would likely have bungled at one point or another.

Cam Linke, CEO of the Edmonton-based Alberta Machine Intelligence Institute (Amii), says AI affects more parts of our daily routines than most people realize, from which ad or TikTok video appears next in your feed to the development of a new pharmaceutical treatment or food technology.

“There’s things that are kind of obvious and are very consumer, and a lot of ways behind the scenes that AI is being used to help our everyday lives that we don’t really see,” he says.

“It’s such an exciting field with so many positive ways it can have an impact on the world. Look at the areas we have a specific focus around: bio health, ag, energy — these are all really big areas, with really big, important problems that the world is facing.”

AI has never been more a part of our daily lives, nor more easily accessible.

Take, for instance, the explosive growth of technologies like ChatGPT, an AI tool that lets users get help with homework, write code or even generate jokes, all through simple, natural-language prompts and responses. Within a week of its launch in November 2022, one million users had tried it. Within two months of launch, that number had grown to more than 100 million.